Star sizes in the universe: can we have a recount, please?

When astronomers state how many stars of different sizes there are in our Milky Way and elsewhere, they don’t simply count them; they estimate. These estimates rest on models that have been developed and then checked against observations. One such model is the Salpeter function, first derived in 1955 by Edwin Salpeter for the region around the Sun. It describes how the initial masses of young stars are distributed, and this distribution has long been thought to mirror that of the masses of the cores from which the stars form. According to the function, there should be significantly more low-mass stars than high-mass stars, which in turn affects how many of the end products of massive stars, that is, black holes and neutron stars, exist in the universe.
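To get a feel for how lopsided the Salpeter function is, here is a minimal sketch in Python. It uses the usual power-law form of the function, in which the number of stars per unit mass falls off as the mass to the power of -2.35. The two mass bins and the name `relative_count` are assumptions chosen for illustration; they do not come from the study.

```python
# Salpeter (1955) power law: dN/dm is proportional to m**(-2.35),
# with m in solar masses.
SALPETER_ALPHA = 2.35


def relative_count(m_lo, m_hi, alpha=SALPETER_ALPHA):
    """Unnormalised number of stars with initial mass between m_lo and m_hi.

    This is the integral of m**(-alpha) from m_lo to m_hi (alpha != 1).
    """
    p = 1.0 - alpha  # exponent left over after integrating m**(-alpha)
    return (m_hi**p - m_lo**p) / p


# Illustrative mass bins (assumed for this sketch, not taken from the article).
low_mass = relative_count(0.5, 1.0)     # roughly Sun-like and smaller
high_mass = relative_count(8.0, 100.0)  # massive stars

ratio = low_mass / high_mass
print(f"Low-mass stars per high-mass star: {ratio:.1f}")
```

With these bins, the function predicts a few dozen low-mass stars for every massive one. The exact number depends strongly on where the bin edges are placed.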

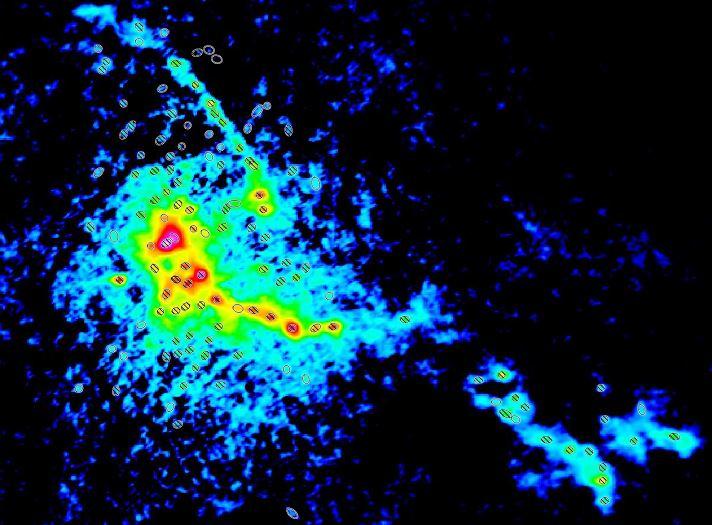

A new international study has now shown that previous assumptions might be wrong. The problem is that the observations used to check the model came primarily from molecular clouds of relatively low density within the Milky Way. Such clouds are easy to observe, and so their contents are easy to count, but they are not at all typical of star-forming regions. Using the radio telescope ALMA, scientists have now measured W43-MM1, a significantly more distant but more typical molecular cloud, and determined the distribution of masses there. Surprisingly, it does not fit the Salpeter function: there are significantly more heavy stars than the function predicts. As a next step, the team wants to analyze fifteen other similar clouds. If conditions in those clouds also differ from what was previously assumed, then a few other models will have to be re-examined. This could mean, for example, that there are significantly more black holes in the universe than previously thought.
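Why would a different mass distribution imply more black holes? A rough sketch in Python: compare the share of massive stars under the Salpeter slope of 2.35 with the share under a flatter power law. The flatter slope of 2.0 is purely hypothetical and is not the value measured in W43-MM1. The mass limits and the cut at 8 solar masses, a commonly quoted approximate threshold above which stars end as neutron stars or black holes, are likewise assumptions of this sketch.

```python
def fraction_above(m_cut, alpha, m_min=0.5, m_max=100.0):
    """Fraction of stars heavier than m_cut for a power law dN/dm ~ m**(-alpha).

    Masses are in solar masses; m_min and m_max are assumed limits of the
    distribution, chosen only for illustration (alpha != 1).
    """
    p = 1.0 - alpha
    total = m_max**p - m_min**p  # integral over the whole mass range
    above = m_max**p - m_cut**p  # integral from m_cut upwards
    return above / total


salpeter = fraction_above(8.0, alpha=2.35)  # classic Salpeter slope
shallower = fraction_above(8.0, alpha=2.0)  # hypothetical flatter slope

print(f"Salpeter slope:  {salpeter:.1%} of stars above 8 solar masses")
print(f"Shallower slope: {shallower:.1%} of stars above 8 solar masses")
```

Even this modest change in slope more than doubles the share of massive stars in the toy calculation, which is why a deviation from the Salpeter function matters for estimates of how many black holes and neutron stars exist.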